Intelligence: A Simple Explanation

Thinking and feeling are different sides of the same process

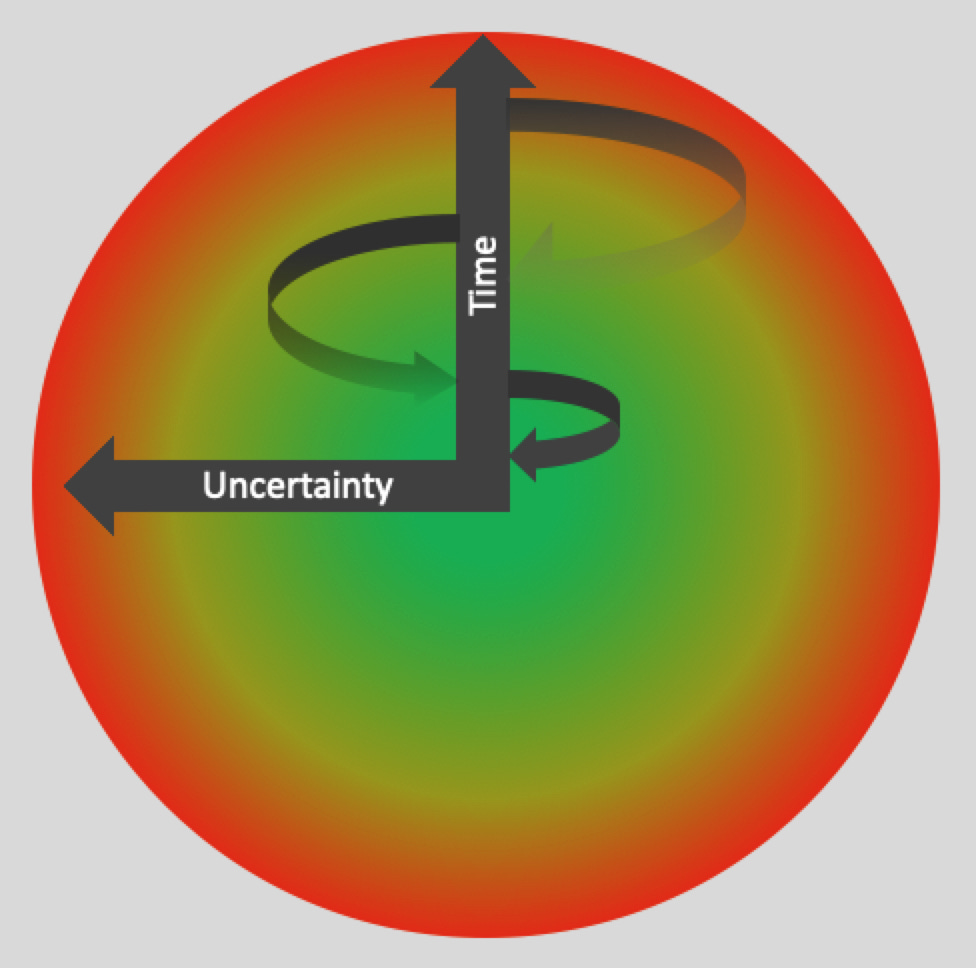

Idea: Information is stored in the brain in networks of connected neurons. However, the certainty associated with this information will vary. Consequently, there’ll be a core of high-certainty information bounded by low-certainty information. It will take time to activate and integrate these networks when thinking, and this will involve branching of activity within and between the networks. As more time elapses, the probability that this branching will activate low-certainty information increases. This may lead to new and useful connections, but it will also be experienced as negative and will thus be inhibitory. There is therefore a subtle, functional relationship between what we know about the world, how this is represented by physical events in the brain, and how these events influence our motives.

I’m struggling to write posts and it’s due to how I view intelligence. I’ve already written some posts related to this topic (see here, here, and here), but I think I’ll find it easier if there’s a single post I can reference when I feel I'm invoking an idiosyncratic view of intelligence. To explain the idea for this post, I’ll first outline some properties I think an idea about intelligence should have:

1. It should be simple. My background is in cellular and molecular neuroscience, and I’m biased towards the view that there isn’t enough information in our genes to directly code for our behaviour in any meaningful sense. Also, the history of science shows that nature creates complexity via simple mechanisms. One of the best examples is the Theory of Evolution, which rationalises how the complexity of biology could emerge without directly explaining the details.

2. It should be universal. The Theory of Evolution rationalises the complexity of biology across time and place – from microorganisms to us, from millions of years ago until now. An equivalent idea for intelligence should have this property within its own scope. That is, any intelligent or intelligent-like behaviour (henceforth just intelligence) should be derived from the same mechanism, whether it’s in insects or humans, across time, and, specific to us, in both social and abstract cognition.

3. It should be consistent with established science. Over time, established fields of science have been found to share concepts and theories; for example, the laws of thermodynamics apply across physics, chemistry, and biology. The brain is a physical system, so it seems to me we should assume it functions according to the same principles already observed in the rest of nature.

4. Feelings of any kind (eg, emotions, motivations, consciousness) are functionally important. Feelings motivate us, so if they are to be functional, they must be intelligent. That is, they should motivate us to act in a way that reflects useful knowledge of the world.

I’ll now outline the idea, which is illustrated by the figure at the top of this post:

Information and the correlations between pieces of information (henceforth just information) are stored in the brain in networks of connected neurons. At any one time, we will have a core of information we feel certain about (illustrated by the green colour in the figure above) that is bounded by information that becomes increasingly uncertain. As activity spreads through these networks (illustrated by the time arrow), two features should emerge. Firstly, more networks, and the information they represent, will be connected, which may improve cognitive output. Secondly, the probability of uncertain information being activated will increase. This may lead to novel connections, but it will also be experienced as negative and therefore inhibit further cognition. In other words, cognition involves a time-lag between the initiation of a thought and its completion, during which novel insights may emerge but which will also be experienced as negative. In fact, a thought’s completion may be driven by the negative experience rather than by reaching a functional conclusion. Equally, though, the negative experience has a functional role in signalling that our understanding is insufficient. Therefore, there is a subtle relationship between thinking and feeling.
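A toy simulation may help make the time-lag concrete. It is purely illustrative and not a model of real neurons: it assumes a small hypothetical network (the node names, links, and certainty values are invented), treats spreading activity as breadth-first branching out from a high-certainty core, and uses the summed uncertainty (1 minus certainty) of the activated nodes as a stand-in for the negative experience. The `threshold` parameter in `think` is likewise an assumption, representing how much negative affect is tolerated before a thought is abandoned.

```python
# Hypothetical toy network. Each node stands for a piece of information with
# an assumed certainty between 0 (very uncertain) and 1 (very certain).
CERTAINTY = {
    "core": 1.0,
    "a": 0.9, "b": 0.9,   # close to the certain core
    "c": 0.6, "d": 0.6,   # less certain
    "e": 0.3, "f": 0.2,   # uncertain periphery
}
EDGES = {
    "core": ["a", "b"],
    "a": ["core", "c"], "b": ["core", "d"],
    "c": ["a", "e"], "d": ["b", "f"],
    "e": ["c"], "f": ["d"],
}


def spread(start, steps):
    """Breadth-first spread of activity: at each step, every newly activated
    node activates its not-yet-active neighbours (branching)."""
    active = {start}
    frontier = [start]
    for _ in range(steps):
        next_frontier = []
        for node in frontier:
            for neighbour in EDGES[node]:
                if neighbour not in active:
                    active.add(neighbour)
                    next_frontier.append(neighbour)
        frontier = next_frontier
    return active


def negative_affect(active):
    """Assumed stand-in for the negative experience: the summed
    uncertainty (1 - certainty) of all activated nodes."""
    return sum(1.0 - CERTAINTY[node] for node in active)


def think(start, threshold, max_steps=10):
    """Keep spreading until negative affect exceeds `threshold`, i.e. the
    thought ends because it feels bad, not because it reached a conclusion.
    Returns the step at which it stopped (or max_steps if it never did)."""
    for t in range(max_steps + 1):
        if negative_affect(spread(start, t)) > threshold:
            return t
    return max_steps


for t in range(4):
    active = spread("core", t)
    print(t, sorted(active), round(negative_affect(active), 2))

print(think("core", threshold=0.5))
```

For this network the loop prints negative-affect values of 0.0, 0.2, 1.0 and 2.5 as activity branches outwards, and `think("core", threshold=0.5)` stops at step 2, before the least certain nodes are reached. Raising the threshold lets activity reach the uncertain periphery, where novel connections would live, at the cost of more negative affect.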

Properties of the idea:

1. The time-lag between the initiation of a thought and its termination creates the opportunity for original connections between information; this is due to the branching within and between networks of connected neurons – more time, more branching, more novelty. There is therefore the potential for an evolutionary process to occur where new ideas can be tested and persist if they’re useful.

2. To make our way through the world productively, we must process and integrate large amounts of diverse information (social, physical, abstract, etc). The idea in this post suggests this information is reduced to a binary output: positive or negative affect. The negative affect emerges as we concentrate on something, and my current thinking is that the positive affect is experienced when we find a way to reduce the uncertainty we’ve revealed during this period of focused thought. We can then act on these feelings without necessarily being conscious of every detail that generated them. Reducing cognitive output to a binary go/no-go signal massively simplifies thinking and behaviour. These signals are our emotions, motivations, and conscious perceptions.

3. The figure above assumes that networks representing high-certainty information feed back on each other. However, as more time elapses and branching into networks finds less certain connections, the probability that the activity will feed back is reduced. In fact, the activity may just terminate in a chaotic mix of dead ends. This would be an inefficient use of the brain’s energy and, as for all physical systems, it’s reasonable to assume the brain is organised to be energy efficient. The negative affect created by the activation of uncertain information therefore signals that energy is being used inefficiently.

4. The idea presented in the figure above suggests there are two ways cognition can generate functional outcomes, determined by the type of branching into neural networks: the branching may be constrained so that only relatively certain information is activated, or it may extend to activate more uncertain information. There are various systems in the brain that may regulate this. For this post, I'll describe these two aspects of cognition in everyday terms. When more certain information is activated, this would be experienced as conscious, procedural, and finite; in contrast, if more uncertain information is activated, cognition would be more intuitive, creative, and not as easily resolved. The latter could also result in unpleasant emotional states, because uncertain information has been activated to give access to novel connections. This would therefore explain why creativity and strong emotions, even mental health issues, are often linked.

5. The idea in this post could be framed purely in terms of energy use. So, rather than the negative experience generated by uncertain information being the motivating force, it would be the inefficient energy use caused by the uncertain information that drives behaviour, with the negative experience just an epiphenomenon. I’ll discuss this in other posts. Although I do think the emotional component is important, it’s derived from physical events in the brain, such as energy use.

People have tried to define intelligence in various ways, see here. I find these definitions unsatisfactory, so I'll suggest my own:

Intelligence is the conversion of a basic set of needs into discrete and context-specific emotions, motivations, and conscious perceptions, achieved through a subtle relationship between how the certainty and uncertainty associated with information are physically represented in the brain. These emotions, motivations, and perceptions are used as binary go/no-go signals to direct action to satisfy the basic needs.

This definition captures the essential idea within this post that intelligence is about the refinement of a basic set of biological needs, and this process is grounded in the brain as a physical system.

I want to keep this post short; I'll be exploring this idea further many times. But there is one last thing I think is important. Artificial intelligence (AI) has recently been prominent in the news. Although superficially impressive, AI systems such as ChatGPT just use basic statistics and brute computational power to regurgitate information already generated by us. In a sense, they’re doing just one component of what the brain does: recognising and storing information and the correlations between information. But AI has no self-motivation.

The idea in this post has a simple explanation for motivation. We have evolved basic needs, such as for food and sex. The brain will activate networks that contain information related to these needs and continue activating them until the need is satisfied. This chronic activation will gradually include uncertain information, which will have two effects: it may suggest novel ways to achieve the goal, but it will also create a motivation to reduce exposure to the uncertain information, which will be experienced as negative.
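The motivation loop described above can also be sketched as a toy. Again, this is an illustration under stated assumptions rather than a claim about how the brain implements it: the `Option` type, the certainty values, the `tolerance` parameter, and the rule that options are activated from most to least certain are all hypothetical. Each activated option adds its uncertainty to a running negative affect; the loop ends with a "go" signal when an option would satisfy the need, or a "no-go" signal when the accumulated negative affect exceeds the tolerance.

```python
from typing import List, NamedTuple, Optional, Tuple


class Option(NamedTuple):
    """A hypothetical piece of need-related information."""
    name: str
    certainty: float       # 0 = very uncertain, 1 = very certain
    satisfies_need: bool   # would acting on it satisfy the need?


def pursue_need(options: List[Option],
                tolerance: float) -> Tuple[str, Optional[str], float]:
    """Activate options from most to least certain until one satisfies the
    need ("go") or accumulated negative affect exceeds `tolerance` ("no-go").

    Returns (signal, name of the chosen option or None, accumulated affect)."""
    affect = 0.0
    for option in sorted(options, key=lambda o: o.certainty, reverse=True):
        affect += 1.0 - option.certainty   # uncertain information feels negative
        if option.satisfies_need:
            return "go", option.name, affect
        if affect > tolerance:
            return "no-go", None, affect
    return "no-go", None, affect   # options exhausted: the need stays unmet


# Hypothetical options for a food need; the familiar ones don't work this time.
options = [
    Option("familiar source", 0.9, False),
    Option("half-remembered source", 0.5, False),
    Option("untried idea", 0.2, True),
]

print(pursue_need(options, tolerance=2.0))
print(pursue_need(options, tolerance=0.5))
```

With a generous tolerance the loop reaches the uncertain option that actually satisfies the need (a novel solution, bought with more negative affect); with a low tolerance it gives up first. The single `tolerance` number is only a crude stand-in for the various brain systems that may regulate how far branching extends.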

I don’t see any reason why this basic framework couldn’t be applied to AI systems. But here’s the problem. This post suggests that intelligence involves the refinement and repackaging of basic needs into many individual context-specific motivations. However, it’s difficult to predict what these will be; they are limited only by the context and the level of intelligence.

Humans may have built civilisations using this process. And destroyed many on the way. If the idea in this post is true, applying it to AI systems would be dangerous. It would be difficult to predict what new motivations the AI system would create to satisfy whatever basic needs it’s been given. I suspect this property will be true of any idea that allows true intelligence to be created, so for this reason I think AI systems built using such ideas should be restricted.

There’s much that remains undefined in this post, but I have to start somewhere. I’ll be returning to this idea both directly and indirectly in other posts, which I'll hopefully now find easier to write.